Teaching AI Concepts

I’m running a short online training course on AI concepts for a fintech company, a couple of hours each afternoon this week.

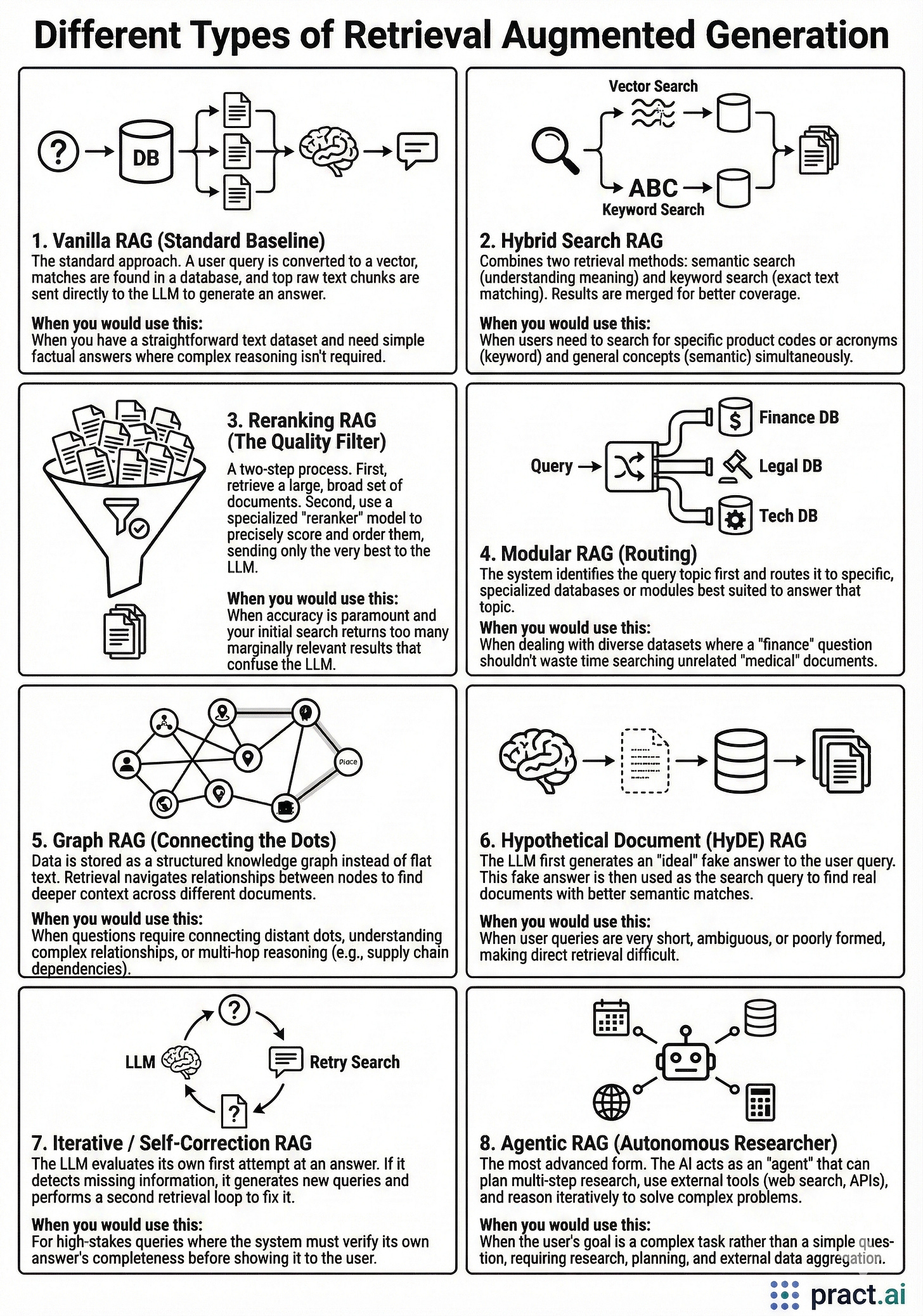

The handout below is one of a set covering foundational AI concepts. This one catalogs the better-known RAG patterns as a reference.

The culmination of these short sessions is the team building their own private knowledge system, using a local LLM, to interact with their documents.

To get to that stage we will also cover chunking strategy, i.e. how they split their files (by paragraph, by heading, fixed token windows, overlap size, etc.). We will work with a fixed data set so it is easy for them to validate whether their system is working.
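To make the chunking options concrete, here is a minimal sketch of two of them: splitting by paragraph, and fixed token windows with overlap. The function names are hypothetical, and whitespace-separated words stand in for real tokens, which is an assumption; a real system would use the tokenizer that matches its model.

```python
def chunk_by_paragraph(text):
    """Split on blank lines, dropping empty paragraphs."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]


def chunk_fixed_window(tokens, window_size, overlap):
    """Slide a fixed-size window over tokens, sharing `overlap` tokens
    between neighbouring chunks."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    if not 0 <= overlap < window_size:
        raise ValueError("overlap must be >= 0 and smaller than window_size")

    step = window_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + window_size])
        # Stop once a window has reached the end of the input,
        # so we do not emit a trailing chunk that is pure overlap.
        if start + window_size >= len(tokens):
            break
    return chunks


if __name__ == "__main__":
    # Assumption: whitespace "tokens" as a stand-in for a real tokenizer.
    words = "refunds are processed within five working days of approval".split()
    for chunk in chunk_fixed_window(words, window_size=4, overlap=1):
        print(" ".join(chunk))
```

The overlap is the knob worth experimenting with on a fixed data set: too little and a sentence that straddles a boundary is split across two chunks, too much and the index fills up with near-duplicates.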

We’ll also take a look at the large context window approach as an alternative to RAG. With context windows now reaching 128K–200K tokens (and Gemini at 1–2M), many small-to-medium document collections can fit entirely in context. For a team with, say, 50 pages of internal policies, long context could actually be the better answer in some cases.
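A quick back-of-the-envelope check can show whether a collection is a long-context candidate. The sketch below is only an estimate: the 500 words per page, 1.3 tokens per word, and 4,000 tokens reserved for the answer are rough rules of thumb I am assuming for illustration, and the function names are hypothetical. Counting with the model's actual tokenizer is the reliable way to know.

```python
def estimate_tokens(pages, words_per_page=500, tokens_per_word=1.3):
    """Rough token estimate for a document collection.
    Both ratios are assumed rules of thumb, not measured values."""
    return round(pages * words_per_page * tokens_per_word)


def fits_in_context(pages, context_window, reserved_for_answer=4000):
    """True if the estimated tokens plus room for the reply fit in the window."""
    return estimate_tokens(pages) + reserved_for_answer <= context_window


if __name__ == "__main__":
    for pages in (50, 500, 5000):
        tokens = estimate_tokens(pages)
        verdict = "fits" if fits_in_context(pages, 128_000) else "does not fit"
        print(f"{pages} pages ~ {tokens} tokens: {verdict} in a 128K window")
```

Under these assumptions, 50 pages comes to roughly 32,500 tokens, which leaves plenty of headroom in a 128K window.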

Once they realize the model only sees what’s in the prompt (nothing more, nothing less), the need for retrieval becomes more obvious. Nothing teaches better than watching the system confidently return the wrong document or hallucinate a policy that doesn’t exist, and it then becomes fun to drill down into the ‘why’.